OpenAI Signals Imminent "Cybersecurity High" Threshold as GPT-5.2-Codex Transforms Defensive Security

Sam Altman announces upcoming releases will reach unprecedented AI cyber capability levels, introducing "defensive acceleration" strategy

January 24, 2026 | CISO Marketplace

In a significant announcement posted to X on January 23, 2026, OpenAI CEO Sam Altman revealed that the company is preparing to cross a critical threshold in AI cybersecurity capabilities. According to Altman, OpenAI will "reach the Cybersecurity High level on our preparedness framework soon," marking the first time any of its models would achieve this risk classification under its internal safety evaluation system.

The announcement comes alongside news of "exciting launches related to Codex" over the coming weeks, building on the December 2025 release of GPT-5.2-Codex, OpenAI's most advanced agentic coding model yet. For cybersecurity professionals, enterprise security teams, and CISOs evaluating AI-assisted security tooling, this development signals both substantial opportunities and new considerations for threat modeling.

Understanding What "Cybersecurity High" Actually Means

OpenAI's Preparedness Framework (updated to Version 2.0 in April 2025) serves as the company's internal system for tracking and evaluating frontier AI capabilities that could create risks of severe harm. The framework focuses on three primary tracked categories:

- Biological and Chemical capabilities — potential to reduce barriers to creating weapons

- Cybersecurity capabilities — potential for scaled cyberattacks and vulnerability exploitation

- AI Self-improvement capabilities — potential challenges for human control of AI systems

Under this framework, "High" capability represents a significant threshold—models that could "amplify existing pathways to severe harm." In cybersecurity terms, OpenAI defines High capability as models that can either:

- Develop working zero-day remote exploits against well-defended systems, OR

- Meaningfully assist with complex, stealthy enterprise or industrial intrusion operations aimed at real-world effects

The framework mandates that any system reaching High capability must have safeguards that "sufficiently minimize the associated risk of severe harm before they are deployed." This requirement becomes operationally significant: OpenAI cannot simply release a High-capability model without implementing additional protective measures.

Notably, GPT-5.2-Codex (released in December 2025) was explicitly stated to not yet reach the High threshold, though OpenAI acknowledged it represented "the strongest cybersecurity capabilities" of any model it had released to date. Altman's announcement suggests the next generation of Codex models will cross this line.

The Dual-Use Reality: Offense and Defense Share the Same Tools

Altman's post directly addressed the inherent tension in AI cybersecurity capabilities:

"Cybersecurity is tricky and inherently dual-use; we believe the best thing for the world is for security issues to get patched quickly."

This acknowledgment reflects a fundamental truth that security professionals understand well—the same knowledge, techniques, and tools that enable defensive security work can also be leveraged for offensive purposes. A model capable of identifying vulnerabilities for patching is inherently capable of identifying vulnerabilities for exploitation.

OpenAI's approach to this challenge involves what Altman described as "defensive acceleration—helping people patch bugs—as the primary mitigation." The strategy appears to rest on the premise that broadly available defensive AI capabilities will strengthen overall security posture faster than the corresponding offensive capabilities can be weaponized by threat actors.

The company plans to implement initial restrictions to "block people using our coding models to commit cybercrime," with Altman citing examples like preventing explicit requests to "hack into this bank and steal the money." However, sophisticated threat actors rarely phrase their requests so obviously, making the real challenge considerably more nuanced.

What GPT-5.2-Codex Already Delivers for Security Teams

The current GPT-5.2-Codex model provides substantial capabilities relevant to enterprise security operations. According to OpenAI's documentation and independent evaluations, the model demonstrates significant improvements across several key areas.

Vulnerability Research and Discovery

Perhaps the most compelling demonstration of these capabilities came from security researcher Andrew MacPherson at Privy. Using GPT-5.1-Codex-Max (the previous generation), MacPherson's team discovered multiple vulnerabilities in React Server Components while investigating CVE-2025-55182, a critical remote code execution vulnerability with a CVSS score of 10.0.

The AI-assisted research led to the responsible disclosure of three additional CVEs, listed below alongside the original CVE-2025-55182:

| CVE | Description | CVSS Score |
|---|---|---|
| CVE-2025-55182 | Critical RCE in React Server Components | 10.0 |
| CVE-2025-55183 | Source code exposure | 5.3 |
| CVE-2025-55184 | Additional vulnerability | not given |
| CVE-2025-67779 | Denial of service | 7.5 |

This represents exactly the kind of defensive acceleration OpenAI envisions—security researchers using AI assistance to identify and responsibly disclose vulnerabilities before they can be exploited in the wild.
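
For teams that want to fold disclosures like these into a triage queue, the scores above can be mapped to the standard CVSS v3.x qualitative severity bands (Low 0.1 to 3.9, Medium 4.0 to 6.9, High 7.0 to 8.9, Critical 9.0 to 10.0). The following is a minimal sketch in Python, not part of any OpenAI or Privy tooling; the `Finding` class and the `triage_order` helper are hypothetical names introduced here for illustration, and the data is copied from the table above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Finding:
    """Hypothetical record type for one disclosed vulnerability."""
    cve_id: str
    description: str
    cvss: Optional[float]  # None when the article gives no score


def severity(score: Optional[float]) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if score is None:
        return "Unscored"
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS base scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


# Data taken from the table in the article; CVE-2025-55184 has no score there.
FINDINGS = [
    Finding("CVE-2025-55182", "Critical RCE in React Server Components", 10.0),
    Finding("CVE-2025-55183", "Source code exposure", 5.3),
    Finding("CVE-2025-55184", "Additional vulnerability", None),
    Finding("CVE-2025-67779", "Denial of service", 7.5),
]


def triage_order(findings: List[Finding]) -> List[Finding]:
    """Sort scored findings by descending score; unscored findings go last."""
    return sorted(findings, key=lambda f: (f.cvss is None, -(f.cvss or 0.0)))


if __name__ == "__main__":
    for f in triage_order(FINDINGS):
        print(f"{f.cve_id}: {severity(f.cvss)}")
```

Placing unscored findings last is a design choice made for this sketch; a team that treats missing scores as a reason for manual review might prefer to surface them first.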

Professional-Grade Performance Metrics

On benchmark evaluations, GPT-5.2-Codex demonstrates measurable capability improvements:

| Benchmark | GPT-5.2-Codex | GPT-5.2 | GPT-5.1 |
|---|---|---|---|
| SWE-Bench Pro | 56.4% | 55.6% | 50.8% |
| Terminal-Bench 2.0 | 64.0% | 62.2% | not reported |

SWE-Bench Pro evaluates the ability to generate correct patches within large, unfamiliar codebases. Terminal-Bench 2.0 measures agentic behavior in live terminal environments.
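
To put the table in perspective, the gaps between models can be expressed in percentage points. The short Python sketch below does only that arithmetic on the figures quoted above; the `SCORES` dictionary and the `point_gain` function are illustrative names, not part of any benchmark harness.

```python
from typing import Optional

# Benchmark scores (percent) as quoted in the table; None means not reported.
SCORES = {
    "SWE-Bench Pro": {"GPT-5.2-Codex": 56.4, "GPT-5.2": 55.6, "GPT-5.1": 50.8},
    "Terminal-Bench 2.0": {"GPT-5.2-Codex": 64.0, "GPT-5.2": 62.2, "GPT-5.1": None},
}


def point_gain(benchmark: str, newer: str, older: str) -> Optional[float]:
    """Return the percentage-point gain of `newer` over `older`, or None if a score is missing."""
    new, old = SCORES[benchmark][newer], SCORES[benchmark][older]
    if new is None or old is None:
        return None
    # Round to one decimal place to match the table's precision.
    return round(new - old, 1)


if __name__ == "__main__":
    print(point_gain("SWE-Bench Pro", "GPT-5.2-Codex", "GPT-5.2"))       # 0.8
    print(point_gain("SWE-Bench Pro", "GPT-5.2-Codex", "GPT-5.1"))       # 5.6
    print(point_gain("Terminal-Bench 2.0", "GPT-5.2-Codex", "GPT-5.2"))  # 1.8
    print(point_gain("Terminal-Bench 2.0", "GPT-5.2-Codex", "GPT-5.1"))  # None
```

On these figures, the step from GPT-5.2 to GPT-5.2-Codex is under two points on both benchmarks, while the step from GPT-5.1 on SWE-Bench Pro is 5.6 points.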

Perhaps more significantly for security applications, OpenAI reports substantially higher accuracy in professional Capture-the-Flag (CTF) evaluations—multi-step, real-world challenges requiring professional-level cybersecurity skills. The company's internal evaluations show a "sharp jump in capability" with each successive Codex generation.

Long-Horizon Security Workflows

A critical advancement in GPT-5.2-Codex is its ability to maintain context through extended security workflows. Through improved "context compaction" technology, the model can now handle complex, multi-step operations without losing track of objectives or prior findings.

For security teams, this enables workflows like:

- Extended code audits across large repositories

- Comprehensive vulnerability assessments across entire codebases

- Iterative penetration testing scenarios

- Complex malware analysis requiring sustained context

The Trusted Access Pilot: Two-Tier Capability Access

Recognizing that security professionals require capabilities that could appear indistinguishable from malicious use, OpenAI is piloting an invite-only "Trusted Access" program. This program provides vetted security professionals and organizations with expanded model access for legitimate defensive activities.

According to OpenAI's documentation:

"Security teams can run into restrictions when attempting to emulate threat actors, analyze malware to support remediation, or stress test critical infrastructure. We are developing a trusted access pilot to remove that friction for qualifying users and organizations and enable trusted defenders to use frontier AI cyber capabilities to accelerate cyberdefense."

The program appears designed to provide "more permissive models" to verified defensive security practitioners, creating a two-tier system:

- General Access: Includes guardrails against obvious malicious use

- Trusted Access: Fuller capabilities for legitimate red team operations, malware analysis, and infrastructure testing

OpenAI's Required Safeguards for High-Capability Models

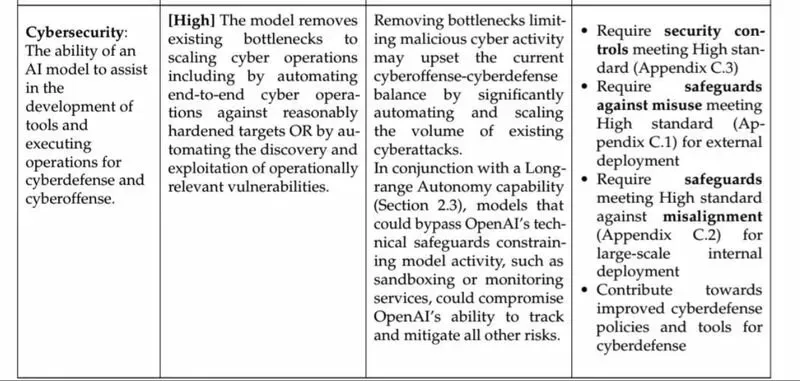

OpenAI's Preparedness Framework sets out requirements for models reaching "High" cybersecurity capability. These requirements include:

- Security controls meeting High standard (Appendix C.3)

- Safeguards against misuse meeting High standard (Appendix C.1) for external deployment

- Safeguards against misalignment meeting High standard (Appendix C.2) for large-scale internal deployment

- Contribution towards improved cyberdefense policies and tools

The framework explicitly acknowledges the risk that removing bottlenecks limiting malicious cyber activity "may upset the current cyberoffense-cyberdefense balance by significantly automating and scaling the volume of existing cyberattacks."

Additionally, the framework warns that models with High cybersecurity capability combined with Long-range Autonomy capability "could bypass OpenAI's technical safeguards constraining model activity, such as sandboxing or monitoring services," which in turn "could compromise OpenAI's ability to track and mitigate all other risks."

Strategic Implications for Enterprise Security

The Compression of the Vulnerability Window

Altman's emphasis on speed—"It is very important the world adopts these tools quickly to make software more secure"—reflects an emerging reality: AI-assisted vulnerability discovery will compress the window between vulnerability existence and exploitation.

Organizations that leverage these capabilities defensively will identify and patch vulnerabilities faster; those that don't will face adversaries who do.

This creates pressure for enterprise security teams to integrate AI-assisted security tooling into their vulnerability management programs, not as an optional enhancement but as a competitive necessity in the evolving threat landscape.

The Capability Democratization Challenge

Altman's observation that "there will be many very capable models in the world soon" points to an uncomfortable reality: even if OpenAI implements robust safeguards, the broader AI landscape will include models with varying levels of safety restrictions.

The defensive acceleration strategy only works if defenders adopt these tools faster than attackers gain access to comparable capabilities elsewhere.

For CISOs, this suggests that the evaluation should center on organizational readiness to deploy AI-assisted security capabilities effectively, rather than on whether to adopt them at all. The question is becoming when and how, not if.

Threat Model Adjustments

The achievement of "Cybersecurity High" capability by any AI model should prompt threat model updates across enterprise security programs. Consider: if frontier AI models can develop working zero-day exploits against well-defended systems or assist with complex intrusion operations, security teams should model scenarios where adversaries have access to such capabilities—whether through legitimate access, jailbroken models, or alternative AI systems.

This doesn't necessarily require dramatic defensive overhauls, but it does suggest that assumptions about attacker capability levels and the sophistication required for certain attack patterns may need recalibration.

Practical Recommendations for Security Leaders

1. Evaluate Current AI Security Tooling Readiness: Assess your organization's current capability to integrate AI-assisted security tools into existing workflows. This includes infrastructure considerations, team training requirements, and governance frameworks for AI-generated security findings.

2. Consider Trusted Access Programs: For organizations with mature security teams conducting red team operations or malware analysis, evaluate whether participation in trusted access programs would provide meaningful capability enhancement for defensive operations.

3. Update Threat Models for AI-Assisted Adversaries: Begin incorporating AI-assisted attack scenarios into threat modeling exercises, particularly for vulnerability discovery, social engineering content generation, and automated reconnaissance.

4. Accelerate Patch Management Cycles: With AI-assisted vulnerability discovery compressing discovery-to-exploitation timelines, organizations should evaluate whether current patch management cycles are responsive enough for the emerging threat landscape.

5. Monitor the Multi-Model Landscape: OpenAI is one of many frontier AI developers. Track capability developments across major AI labs and adjust security assumptions accordingly.
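
One way to start on the patch-cycle recommendation is to measure how long vulnerabilities stay open today and compare that against target remediation windows. The Python sketch below is a minimal illustration under stated assumptions: the `SLA_DAYS` targets, the `VULN-n` identifiers, and the sample dates are all hypothetical and should be replaced with an organization's own policy and ticket data.

```python
from datetime import date
from statistics import median
from typing import Dict, List, Optional, Tuple

# Hypothetical remediation targets in days; not drawn from any standard or vendor.
SLA_DAYS = {"Critical": 7, "High": 30, "Medium": 90}

# (identifier, severity, date discovered, date patched or None if still open)
Record = Tuple[str, str, date, Optional[date]]


def days_open(discovered: date, patched: Optional[date], today: date) -> int:
    """Days from discovery to patch, or to `today` if the issue is still open."""
    return ((patched or today) - discovered).days


def sla_breaches(records: List[Record], today: date) -> List[str]:
    """Return identifiers whose time open exceeds the target for their severity."""
    return [
        rid
        for rid, sev, found, fixed in records
        if days_open(found, fixed, today) > SLA_DAYS[sev]
    ]


def median_days_by_severity(records: List[Record], today: date) -> Dict[str, float]:
    """Median time open per severity level, a rough measure of patch-cycle speed."""
    buckets: Dict[str, List[int]] = {}
    for _, sev, found, fixed in records:
        buckets.setdefault(sev, []).append(days_open(found, fixed, today))
    return {sev: median(days) for sev, days in buckets.items()}


if __name__ == "__main__":
    # Invented sample data for illustration only.
    sample = [
        ("VULN-1", "Critical", date(2026, 1, 2), date(2026, 1, 6)),
        ("VULN-2", "Critical", date(2026, 1, 5), None),
        ("VULN-3", "High", date(2025, 12, 1), date(2026, 1, 15)),
        ("VULN-4", "Medium", date(2025, 12, 20), date(2026, 1, 10)),
    ]
    today = date(2026, 1, 24)
    print(sla_breaches(sample, today))
    print(median_days_by_severity(sample, today))
```

Tracking the median per severity over successive quarters gives a simple signal of whether patch cycles are actually getting shorter as the targets tighten.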

Looking Forward

OpenAI's announcement represents a significant inflection point in AI-assisted cybersecurity. The explicit acknowledgment that its models will soon reach "High" capability levels, combined with the defensive acceleration strategy and tiered access approach, signals that the company is attempting to navigate the dual-use challenge proactively rather than reactively.

Whether this approach succeeds depends significantly on adoption rates by defensive security practitioners. As Altman noted, the bet is that defensive applications of these capabilities can outpace offensive misuse. For enterprise security teams, the practical implication is clear: the AI-assisted security landscape is evolving rapidly, and organizations that position themselves to leverage these capabilities defensively will be better equipped for the threat environment that's emerging.

The coming weeks will likely bring more details about specific capabilities and access mechanisms as OpenAI rolls out its announced Codex updates. Security leaders should monitor these developments closely and begin preparing their organizations for a fundamentally different capability landscape—one where AI serves as a force multiplier for whoever deploys it first.