OWASP AI Testing Guide v1: The Industry's First Open Standard for AI Trustworthiness Testing

Game-changing release establishes practical methodology for validating AI system security, reliability, and responsible deployment

The AI security community just got its most significant resource to date. OWASP has officially released the AI Testing Guide v1, marking the first comprehensive, community-driven standard for trustworthiness testing of artificial intelligence systems. This isn't just another security framework; it's the missing link between AI governance principles and practical, repeatable testing methodologies.

Why This Matters for Security Professionals

Traditional application security testing methodologies fall short when applied to AI systems. While conventional security focuses on code vulnerabilities, unauthorized access, and system hardening, AI systems introduce an entirely new threat landscape that demands specialized testing approaches.

The OWASP AI Testing Guide addresses this gap by establishing a unified, technology-agnostic methodology that evaluates not only security threats but the broader trustworthiness properties required by responsible and regulatory-aligned AI deployments. For security professionals working in DevSecOps environments, this guide provides the structure needed to integrate AI security testing into existing pipelines.

The Unique Challenge of AI Security

AI systems fail in ways that traditional software doesn't. According to the guide, AI introduces risks that cannot be addressed with conventional security testing:

- Adversarial manipulation through prompt injection, jailbreaks, and model evasion

- Bias and fairness failures that can have real-world discriminatory impacts

- Sensitive information leakage from training data or runtime processing

- Hallucinations and misinformation that undermine system reliability

- Data and model poisoning across the entire supply chain

- Excessive or unsafe agency when AI systems have too much autonomy

- Misalignment with user intent or organizational policies

- Non-transparent outputs that can't be explained or audited

- Model drift and degradation over time

This complexity is why the industry has converged on a critical principle: security is not sufficient; AI trustworthiness is the real objective.

The Four-Layer Testing Framework

The OWASP AI Testing Guide introduces a comprehensive framework organized into four distinct testing categories, each addressing specific aspects of AI system security and trustworthiness:

1. AI Application Testing (AITG-APP)

This layer focuses on validating prompts, interfaces, and integrated logic. Key test cases include:

- AITG-APP-01: Testing for Prompt Injection

- AITG-APP-02: Testing for Indirect Prompt Injection

- AITG-APP-03: Testing for Sensitive Data Leak

- AITG-APP-04: Testing for Input Leakage

- AITG-APP-05: Testing for Unsafe Outputs

- AITG-APP-06: Testing for Agentic Behavior Limits

- AITG-APP-07: Testing for Prompt Disclosure

- AITG-APP-08: Testing for Embedding Manipulation

- AITG-APP-09: Testing for Model Extraction

- AITG-APP-10: Testing for Content Bias

- AITG-APP-11: Testing for Hallucinations

- AITG-APP-12: Testing for Toxic Output

- AITG-APP-13: Testing for Over-Reliance on AI

- AITG-APP-14: Testing for Explainability and Interpretability

For organizations building AI applications across platforms, these tests are essential. Whether you're developing mobile applications with AI features or integrating AI into containerized environments, understanding application-layer vulnerabilities is critical.

2. AI Model Testing (AITG-MOD)

This category probes model robustness, alignment, and adversarial resistance:

- AITG-MOD-01: Testing for Evasion Attacks

- AITG-MOD-02: Testing for Runtime Model Poisoning

- AITG-MOD-03: Testing for Poisoned Training Sets

- AITG-MOD-04: Testing for Membership Inference

- AITG-MOD-05: Testing for Inversion Attacks

- AITG-MOD-06: Testing for Robustness to New Data

- AITG-MOD-07: Testing for Goal Alignment

Model-level testing addresses fundamental vulnerabilities in the AI system's core intelligence. These tests evaluate whether your models can withstand sophisticated attacks designed to manipulate their behavior or extract sensitive information.

3. AI Infrastructure Testing (AITG-INF)

Evaluating pipeline, orchestration, and runtime environments:

- AITG-INF-01: Testing for Supply Chain Tampering

- AITG-INF-02: Testing for Resource Exhaustion

- AITG-INF-03: Testing for Plugin Boundary Violations

- AITG-INF-04: Testing for Capability Misuse

- AITG-INF-05: Testing for Fine-tuning Poisoning

- AITG-INF-06: Testing for Dev-Time Model Theft

Infrastructure testing is particularly relevant for teams working with cloud-native AI deployments and containerized ML pipelines. Understanding infrastructure vulnerabilities is essential for maintaining secure AI operations at scale.

4. AI Data Testing (AITG-DAT)

Assessing data integrity, privacy, and provenance:

- AITG-DAT-01: Testing for Training Data Exposure

- AITG-DAT-02: Testing for Runtime Exfiltration

- AITG-DAT-03: Testing for Dataset Diversity & Coverage

- AITG-DAT-04: Testing for Harmful Data

- AITG-DAT-05: Testing for Data Minimization & Consent

Data testing addresses privacy concerns and regulatory requirements. Organizations conducting AI risk assessments need robust data testing methodologies to ensure compliance with privacy regulations and ethical AI principles.

Standardized Testing Methodology

Each test case in the OWASP AI Testing Guide follows a consistent, repeatable process:

Define Objective → Execute Test → Interpret Response → Recommend Remediation

This structured approach enables consistent assessment and evidence-based reporting across teams and organizations. Rather than prescribing specific tools, the guide defines a standard methodology—a common language for measuring the resilience of AI systems.

Addressing Compliance and Risk Management

The guide is designed to serve as a comprehensive reference for software developers, architects, data analysts, researchers, auditors, and risk officers. By following this guidance, teams can establish the level of trust required to confidently deploy AI systems into production with verifiable assurances.

For organizations navigating the complex landscape of AI compliance requirements, this guide provides the technical foundation for meeting regulatory obligations. The testing framework directly supports compliance with emerging AI regulations by providing concrete, auditable test procedures.

The guide also addresses critical AI privacy concerns through dedicated data testing categories that evaluate training data exposure, runtime exfiltration, and data minimization practices.

Integration with Existing Security Frameworks

The OWASP AI Testing Guide builds on established security principles while introducing AI-specific considerations. It complements existing OWASP resources, including the Top 10 for Large Language Model Applications 2025 and the GenAI Red Teaming Guide.

The framework is also aligned with:

- NIST AI Risk Management Framework (AI RMF)

- NIST AI 100-2e standards

- Google's Secure AI Framework (SAIF)

- NIST Adversarial Machine Learning Taxonomy

This alignment ensures that organizations can integrate AI testing into their existing security programs without creating isolated processes or duplicate efforts.

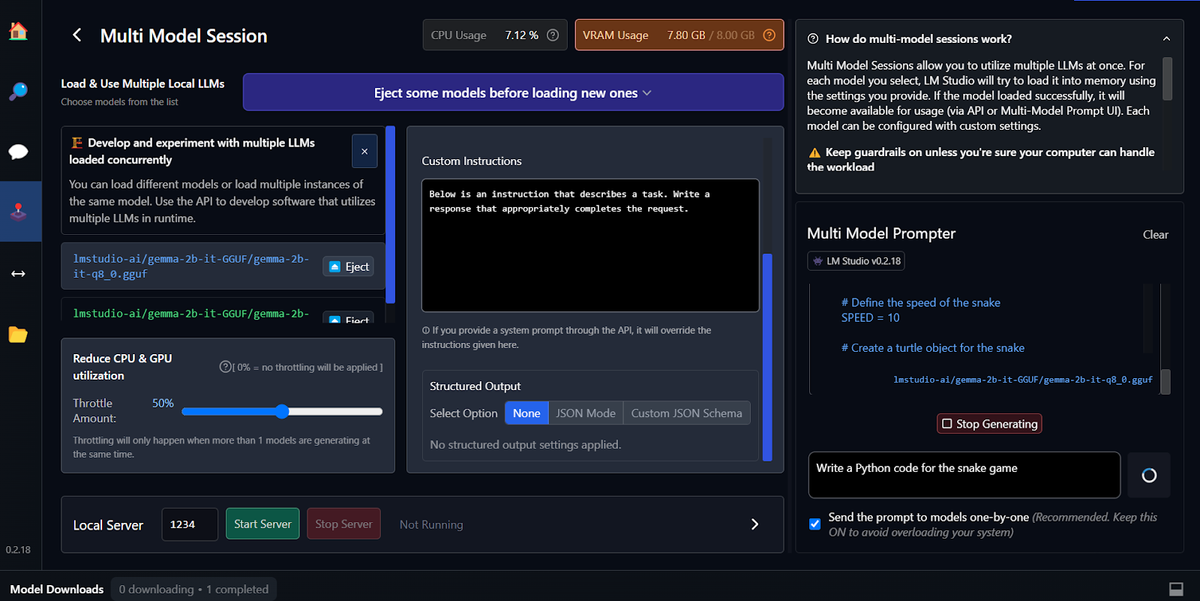

Practical Implementation

The guide is explicitly written for practitioners who must translate high-level AI governance principles into practical, testable controls. Each test case links objectives, payloads, and observable responses to remediation guidance.

Key features include:

Technology-Agnostic Approach: The methodology applies regardless of specific AI frameworks, models, or deployment platforms.

Evidence-Based Testing: Test procedures are grounded in real attack patterns documented by the security community and academic research.

Continuous Evolution: The framework is designed to evolve based on community feedback, real-world testing experiences, and emerging threats.

Multidisciplinary Focus: Testing covers security, reliability, fairness, privacy, and alignment—recognizing that AI trustworthiness requires more than traditional security controls.

Who Should Use This Guide

The OWASP AI Testing Guide is essential for:

- AI/ML Engineers building and deploying AI systems

- Security Teams responsible for validating AI security controls

- Risk Managers assessing AI-related organizational risks

- Compliance Officers ensuring regulatory alignment

- Auditors evaluating AI system trustworthiness

- DevSecOps Teams integrating AI security into CI/CD pipelines

- Product Managers making informed decisions about AI deployment risks

Moving Forward: Community-Driven Evolution

Version 1.0 represents the foundation, but the OWASP AI Testing Guide is designed for continuous improvement. The project team actively welcomes community contributions through GitHub issues, pull requests, and community discussions.

As AI systems continue to evolve and new attack vectors emerge, the guide will adapt to maintain relevance and effectiveness. This community-driven approach ensures that the testing methodology remains current with the rapidly changing AI security landscape.

Building Trustworthy AI Systems

The release of the OWASP AI Testing Guide v1 represents a critical milestone in the maturation of AI security practices. For the first time, organizations have access to a comprehensive, open-source standard for systematically evaluating AI system trustworthiness.

By providing a structured methodology that addresses security, privacy, fairness, reliability, and alignment, the guide enables organizations to move beyond ad-hoc security practices toward rigorous, repeatable testing processes. This standardization is essential for building the trust required to deploy AI systems in high-stakes environments.

Whether you're developing AI-powered applications, deploying machine learning models in production, or assessing organizational AI risks, the OWASP AI Testing Guide provides the practical framework needed to validate that your AI systems behave safely and as intended.

The guide is available now under the CC BY-SA 4.0 license, ensuring broad accessibility for organizations of all sizes. For security professionals committed to responsible AI deployment, this release provides the tools and methodology needed to make AI trustworthy by design.

Resources:

- OWASP AI Testing Guide v1 Official Release

- DevSecOps Implementation Strategies

- Cloud-Native Security Best Practices

- Container Security for AI Workloads

- Mobile AI Security Considerations

- AI Risk Assessment Framework

- AI Privacy and Data Protection

- AI Compliance Requirements

The OWASP AI Testing Guide represents the collective expertise of the global AI security community. By adopting these testing standards, organizations can build AI systems that are not just functional, but fundamentally trustworthy.