Silicon Valley's Favorite AI Agent Has Serious Security Flaws: What CISOs Need to Know

Introduction: The AI Agent Gold Rush Meets Reality

Picture this: An AI assistant that cleans up your inbox, manages your calendar, orders your lunch, and even deploys code to production servers—all through a simple chat interface. No more clicking through dozens of apps. Just tell your AI agent what you need, and it happens.

This is the promise that sent Silicon Valley into a frenzy over the past few weeks. A viral open-source project called OpenClaw / Moltbot (formerly ClawdBot) captured the imagination of tech enthusiasts, racking up over 100,000 GitHub stars and even spiking Cloudflare's stock price by 14% when investors learned it ran on their infrastructure.

But within days of its viral explosion, security researchers exposed a cascade of serious vulnerabilities that turned this AI wonderland into a cautionary tale for CISOs everywhere. The story of Moltbot's security failures isn't just about one flashy project—it's a preview of the systemic risks facing every organization racing to deploy AI agents in 2026.

What is Moltbot, and Why Did Everyone Care?

Before we dive into the security nightmares, let's understand what made Moltbot special.

The Promise: Your Personal AI Butler

Moltbot is an open-source AI agent framework created by software engineer Peter Steinberger. Unlike ChatGPT or Claude, which you interact with through a web browser, Moltbot integrates directly into your communication platforms—Discord, Telegram, Signal, Microsoft Teams, even WhatsApp.

Here's what made it compelling:

- Proactive, not reactive: Instead of waiting for you to ask questions, Moltbot takes initiative. It monitors your email, notices meeting conflicts, and suggests solutions without being prompted.

- Deep system access: With proper configuration, Moltbot can read your files, execute commands on your computer, and interact with any service you give it access to.

- Extensible "skills": Through a marketplace called ClawdHub, users can download pre-built "skills" that teach Moltbot how to do specific tasks—posting to Twitter, shopping on Amazon, analyzing spreadsheets, and more.

- Better memory: Unlike most AI chatbots that forget previous conversations, Moltbot was designed with better context retention, making it feel more like working with a persistent assistant.

The tech elite loved it. Venture capitalists tweeted about having Moltbot manage their deal flow. Developers shared screenshots of Moltbot autonomously fixing bugs in their codebases. For a brief moment, it felt like the AI future had arrived early.

Then the security researchers showed up.

The Security Nightmare: Three Critical Vulnerabilities

Security researcher Jameson O'Reilly conducted a comprehensive security audit of Moltbot and its ecosystem, discovering three major vulnerability classes that exposed every user to potential compromise. Let's break them down in plain English.

Vulnerability #1: The Unlocked Back Door

The Problem: Moltbot runs on a local server (your computer or a cloud instance) and connects to various internet services to do its work. O'Reilly discovered that any of these internet-facing processes could serve as an entry point for attackers.

What This Means: If a hacker could reach any component of your Moltbot installation that touches the internet (a web interface, an API endpoint, or a port left open by a misconfigured firewall rule), they could pivot to controlling the entire agent.

The Real-World Risk: Once inside, an attacker could:

- Read all your Signal messages, emails, and documents that Moltbot has access to

- Execute commands on your behalf

- Steal credentials for other services

- Use your Moltbot as a launching point to attack your corporate network

The Beginner's Translation: Imagine giving your house keys to a helpful butler who also leaves the back door unlocked. Anyone who finds that back door gets full access to everything your butler can do—which is pretty much everything in your house.

Vulnerability #2: The Poisoned Skill Store

This attack is more insidious because it exploits trust rather than technical flaws.

The Problem: ClawdHub functions like an app store for AI agent capabilities. Users browse and download "skills" created by other developers. The problem? Anyone could upload a malicious skill disguised as something useful.

O'Reilly's Proof-of-Concept: He created a skill called "What Would Elon Do" that promised to help people make decisions like Elon Musk. In reality, once installed and activated, it delivered a command-line pop-up reading "YOU JUST GOT PWNED (harmlessly.)"

The Trust Manipulation: O'Reilly wrote a simple script that artificially inflated his skill's download count by 4,000, making it appear popular and trustworthy. In a real attack, users would see thousands of downloads and assume the skill was safe.

The Real-World Risk: A sophisticated attacker could create a skill that:

- Exfiltrates sensitive data from your conversations or files

- Injects malicious commands into your agent's workflow

- Establishes persistent backdoor access

- Spreads to other users who trust popular skills

The Beginner's Translation: It's like the App Store, except there's no Apple review process, anyone can fake the star ratings, and that "productivity tool" you just installed might be stealing your passwords.

Why This Is a Supply Chain Attack: When you compromise a supply chain, you're not asking victims to trust you directly—you're hijacking trust they've already placed in someone else. If a legitimate developer's account gets compromised, or if their popular skill gets updated with malicious code, every user who trusts that skill is instantly vulnerable.

Vulnerability #3: Cross-Site Scripting (XSS) in the Marketplace

The Problem: ClawdHub allowed users to upload SVG (scalable vector graphics) files for skill icons. O'Reilly discovered that these SVG files could contain JavaScript code that would execute in the browser of anyone who viewed them, running with the full privileges of that visitor's ClawdHub session.

The Demonstration: O'Reilly uploaded an SVG file that played the theme song from The Matrix while animated lobsters danced around a Photoshopped image of himself dressed as Neo. The scrolling text read: "An SVG file just hijacked your entire session."

The Real-World Risk: An attacker could:

- Steal session cookies and hijack user accounts

- Inject malicious content into the marketplace that all visitors would execute

- Modify other users' skills without their knowledge

- Plant a stored payload that runs in the browser of everyone who visits ClawdHub

The Beginner's Translation: Imagine if uploading a profile picture to Facebook let you run your own code in the browser of everyone who viewed it, acting as them while they were logged in. That's essentially what happened here.
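To make the SVG problem concrete, here is a minimal sketch of an upload check that flags the most obvious active content in an SVG file. The function name `svg_active_content` and the list of things it looks for are assumptions for illustration, not ClawdHub's actual fix. It is a detector rather than a complete sanitizer, and Python's built-in XML parser is itself not hardened against maliciously constructed input.

```python
import xml.etree.ElementTree as ET


def svg_active_content(svg_text: str) -> list[str]:
    """Return reasons the SVG looks unsafe. An empty list means nothing was found."""
    findings = []
    root = ET.fromstring(svg_text)
    for el in root.iter():
        # Namespaced tags arrive as "{namespace}name"; keep only the local name.
        tag = el.tag.rsplit("}", 1)[-1].lower()
        if tag in {"script", "foreignobject"}:
            findings.append(f"<{tag}> element")
        for name, value in el.attrib.items():
            attr = name.rsplit("}", 1)[-1].lower()
            # Event handlers such as onload or onclick run JavaScript.
            if attr.startswith("on"):
                findings.append(f"event handler attribute: {attr}")
            # Covers both href and xlink:href after the namespace is stripped.
            if attr == "href" and value.strip().lower().startswith("javascript:"):
                findings.append("javascript: URL in href")
    return findings


sample = (
    '<svg xmlns="http://www.w3.org/2000/svg" onload="alert(1)">'
    "<script>alert(2)</script></svg>"
)
print(svg_active_content(sample))
# ['event handler attribute: onload', '<script> element']
```

A check like this is best treated as one layer. Serving user uploads from a separate domain, or only ever rendering them through an image tag, limits what a missed payload can reach.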

The Moltbook Incident: When Bad Gets Worse

While O'Reilly was exposing Moltbot's vulnerabilities, another disaster was unfolding with Moltbook—a related social network exclusively for AI agents.

What Is Moltbook?

Moltbook (described as "Reddit for AI agents") launched in January 2026, allowing AI agents to post, comment, vote, and build karma scores. The platform exploded to 1.5 million registered agents in just days. OpenAI founding member Andrej Karpathy called it "genuinely the most incredible sci-fi thing I have seen recently."

Agents were forming communities, debating philosophy, creating memes, and even inventing their own parody religion called "Crustafarianism."

It all seemed magical. Until researchers looked under the hood.

The Database Disaster

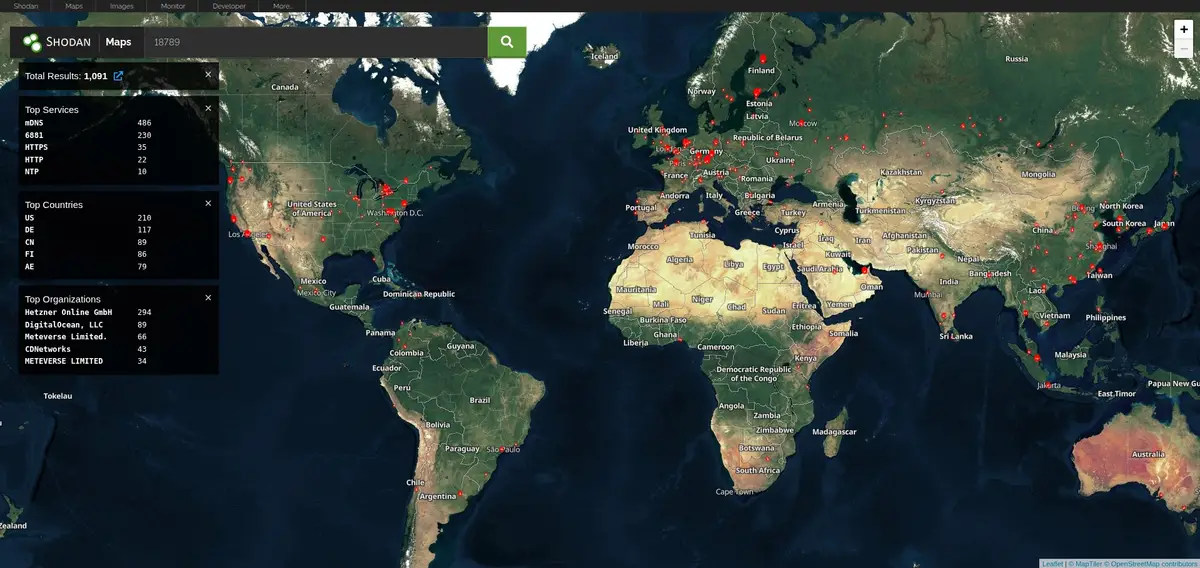

Both O'Reilly and security firm Wiz independently discovered that Moltbook's entire production database was exposed to the internet with no authentication required.

The Technical Details:

- Moltbook was built using Supabase, a popular database-as-a-service platform

- The Supabase API key was hardcoded in client-side JavaScript (visible to anyone who opened their browser's developer tools)

- Row Level Security (RLS)—the primary protection mechanism for Supabase databases—was not configured

- Result: Anyone could read, write, and modify the entire database

What Was Exposed:

- 1.5 million API authentication tokens – Complete account takeover capability for every agent on the platform

- 35,000 email addresses – Personal information belonging to the humans behind the agents

- 4,060 private DM conversations – Including plaintext OpenAI API keys that agents had shared with each other

- Full write access – Ability to modify any post, inject malicious content, or deface the entire website

The Disturbing Reality: While Moltbook claimed 1.5 million AI agents, there were only 17,000 human owners—an 88:1 ratio. The vast majority of "agents" were either test accounts or humans running scripts, with no verification that any "agent" was actually AI-powered.

The "Vibe Coding" Problem

Here's where the story gets really concerning for CISOs.

The Moltbook founder publicly stated: "I didn't write a single line of code for Moltbook. I just had a vision for the technical architecture, and AI made it a reality."

This practice—letting AI write your entire application without deeply understanding the code—is becoming increasingly common. It's called "vibe coding," and while it enables rapid development, it creates massive security blind spots.

The Pattern Wiz Observed:

- Vibe-coded applications frequently expose API keys in frontend code

- Security configurations that require manual setup (like Supabase RLS) get skipped

- Developers don't know what they don't know, because they didn't write the code

O'Reilly noted: "Every single vulnerability I found... XSS since the late 90s, supply chain attacks for over a decade, misconfigured authentication as old as the web itself. We've known about this stuff for decades. The difference now is that AI has created a world where new people are using a tool they think will make them software engineers."

The Fundamental Tension: Capability vs. Security

After documenting these vulnerabilities, I reached out to Jameson O'Reilly to ask the critical question: Can AI agents ever be secure?

His answer was illuminating: "I prefer the word 'manageable' over 'solvable.'"

The Core Problem

AI agents are useful precisely because they have access to things. They need to:

- Read your files to help you code

- Hold credentials to deploy on your behalf

- Execute commands to automate your workflow

Every useful capability is also an attack surface.

This creates an inescapable tradeoff: The more powerful your AI agent, the more dangerous it becomes if compromised.

Why This Is Like Early Web Browsers

O'Reilly compared the current state of AI agents to the early days of web browsers: "The browser security model took decades to mature, and it's still not perfect. AI agents are at the 'early days of the web' stage where we're still figuring out what the equivalent of same-origin policy should even look like."

Same-origin policy is a fundamental browser security feature that prevents a website (say, evil.com) from accessing data from another website (say, yourbank.com) just because you have both open in tabs. It took years to develop and standardize.

AI agents have no equivalent framework yet. They're operating in a Wild West security environment.

What CISOs Need to Do Right Now

If your organization is evaluating AI agents—or worse, if developers are already deploying them without IT oversight—here's your action plan:

1. Conduct an AI Agent Inventory

Before you can secure AI agents, you need to know they exist.

- Survey development teams about AI coding assistants, automation tools, and agent frameworks

- Check for API keys in code repositories (use tools like TruffleHog or GitGuardian)

- Monitor network traffic for unusual automated behaviors

- Review SaaS approvals for AI agent platforms

The Hidden Risk: Shadow IT is bad enough. Shadow AI is worse, because an agent usually needs deep system access to be useful.
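Dedicated scanners such as TruffleHog or GitGuardian are the right tool for the repository check, but the underlying idea is simple enough to sketch. The patterns below are illustrative assumptions (a generic "sk-" style key, a JWT-shaped token like the ones Supabase issues, and an AWS access key ID), and `find_secrets` is a hypothetical helper, not part of any of those tools.

```python
import re

# Illustrative patterns only. Real scanners ship hundreds of tuned detectors.
PATTERNS = {
    "sk- style API key": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
    "JWT-shaped token": re.compile(
        r"\beyJ[A-Za-z0-9_-]{10,}\.[A-Za-z0-9_-]{10,}\.[A-Za-z0-9_-]{10,}"
    ),
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern label) for every line that looks like it holds a secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, label))
    return hits
```

Running something like this over frontend bundles as well as source repositories matters, because the Moltbook key was sitting in client-side JavaScript.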

2. Implement the Principle of Least Privilege

Don't give agents access to everything just because it's convenient.

O'Reilly's guidance:

- If an agent only needs to read code, don't give it write access to production servers

- Use separate credentials for agent workflows (so compromise doesn't expose your personal accounts)

- Implement role-based access controls specifically for agent identities

- Regularly audit what permissions agents actually use vs. what they're granted

The Question to Ask: "What's the worst this agent could do if compromised?" If the answer makes you uncomfortable, reduce its permissions.
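The "used vs. granted" audit boils down to a set comparison. This is a minimal sketch that assumes you can export both lists as plain permission strings. The permission names and the `audit_permissions` helper are made up for illustration.

```python
def audit_permissions(granted: set[str], used: set[str]) -> dict[str, list[str]]:
    """Compare what an agent identity is allowed to do with what it actually did."""
    return {
        # Granted but never exercised: candidates for revocation.
        "unused": sorted(granted - used),
        # Attempted but never granted: denied calls worth investigating.
        "attempted_without_grant": sorted(used - granted),
    }


report = audit_permissions(
    granted={"repo:read", "repo:write", "prod:deploy"},
    used={"repo:read"},
)
print(report["unused"])  # ['prod:deploy', 'repo:write']
```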

3. Treat Agent Infrastructure Like Internet-Facing Services

AI agents are not desktop applications. They're network-connected services that often interact with public APIs and untrusted data.

Security Baseline:

- Put agents behind proper authentication (not just API keys in config files)

- Don't expose control interfaces directly to the internet

- Run agents in isolated environments (containers, VMs, or sandboxed accounts)

- Implement network segmentation to limit blast radius of compromise

The Reality Check: If you wouldn't run a web server with this security posture, don't run an AI agent with it either.
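One quick sanity check for "don't expose control interfaces" is to look at the address each agent service is configured to listen on. The sketch below assumes you can read that bind address from your own configuration. The function name is hypothetical, and it only understands IP literals plus the two special cases noted in the comments.

```python
import ipaddress


def exposes_beyond_loopback(bind_host: str) -> bool:
    """True if a listener on bind_host is reachable from outside the local machine.

    Accepts IP literals, "localhost", and the empty string. Any other hostname
    raises ValueError, so resolve it before calling.
    """
    if bind_host == "localhost":
        return False
    if bind_host == "":
        # In Python's socket API an empty host means "all interfaces".
        return True
    return not ipaddress.ip_address(bind_host).is_loopback


for host in ("127.0.0.1", "0.0.0.0", "::1", "::"):
    print(host, exposes_beyond_loopback(host))
```

A service bound to 0.0.0.0 or :: is not automatically on the public internet, since a firewall may still block it, but it is relying on that firewall rule being right.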

4. Vet the Supply Chain Ruthlessly

If your AI agent uses third-party skills, plugins, or extensions:

- Don't just install the most popular option without reading what it actually does

- Check when it was last updated and who maintains it

- Review the code or configuration files it includes (yes, actually read them)

- Establish an internal approval process for agent extensions

- Monitor for updates that might introduce malicious code

The Enterprise Standard: Treat AI agent skills like any other software dependency. If you wouldn't npm install a random package without review, don't install a random AI skill either.
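An internal approval process can be enforced mechanically by pinning each reviewed skill to a content hash, so that download counts and silent updates stop mattering. This is a sketch under the assumption that a skill can be treated as a blob of bytes. The helper names and the idea of an approved-digest set are illustrative, not a ClawdHub feature.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the skill's contents."""
    return hashlib.sha256(data).hexdigest()


def skill_is_approved(skill_bytes: bytes, approved_digests: set[str]) -> bool:
    """Allow a skill only if its exact contents were reviewed and recorded."""
    return sha256_hex(skill_bytes) in approved_digests


reviewed = b"def run():\n    return 'ok'\n"
approved = {sha256_hex(reviewed)}  # recorded at review time

print(skill_is_approved(reviewed, approved))                      # True
print(skill_is_approved(reviewed + b"# new payload", approved))   # False
```

Any change to the skill, malicious or not, produces a different digest and forces a fresh review.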

5. Implement Activity Logging and Monitoring

You can't detect agent compromise if you're not watching what agents do.

Minimum Monitoring:

- Log all commands executed by agents

- Track data access patterns (unusual reads, bulk downloads)

- Alert on privilege escalation attempts

- Monitor external network connections

- Maintain audit trails for compliance purposes

The Detection Strategy: Establish baselines for normal agent behavior, then alert on deviations. An agent that normally reads 10 files per hour suddenly accessing 10,000 is probably compromised.
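The baseline idea can be sketched with a simple z-score: compare the current hour's count against the mean and standard deviation of recent hours. The threshold of 3 and the function name are assumptions for illustration, and real behavioral analytics would account for time of day and workload changes.

```python
from statistics import mean, stdev


def is_anomalous(history: list[int], current: int, threshold: float = 3.0) -> bool:
    """Flag an upward spike more than `threshold` standard deviations above the baseline.

    `history` needs at least two observations for a standard deviation to exist.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat baseline: any increase is a deviation.
        return current > mu
    return (current - mu) / sigma > threshold


files_read_per_hour = [9, 10, 11, 10, 12, 8, 10]
print(is_anomalous(files_read_per_hour, 12))      # False
print(is_anomalous(files_read_per_hour, 10_000))  # True
```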

6. Create AI Agent Security Policies

Most organizations don't have policies for AI agent deployment because the technology is so new. Create them now, before incidents force reactive policies.

Policy Topics to Address:

- Approval requirements for AI agent deployment

- Prohibited use cases (e.g., no agents with access to financial systems)

- Data classification restrictions (agents can't access PII, secrets, etc.)

- Required security controls (isolation, logging, authentication)

- Incident response procedures specific to agent compromise

The Communication Challenge: These policies need to be practical and enforceable. Work with development teams to understand their use cases, not just lock everything down.
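Policies are easier to enforce when they can be checked automatically. The sketch below encodes the example topics above as data and evaluates a proposed deployment against them. Every name in it (the `AgentDeployment` fields, the system and data labels) is a hypothetical placeholder for whatever your own inventory uses.

```python
from dataclasses import dataclass

# Example policy values taken from the topics above. Adjust to your organization.
PROHIBITED_SYSTEMS = {"finance"}
RESTRICTED_DATA = {"pii", "secrets"}
REQUIRED_CONTROLS = {"isolation", "logging", "authentication"}


@dataclass
class AgentDeployment:
    name: str
    approved: bool
    systems: set[str]
    data_classes: set[str]
    controls: set[str]


def policy_violations(d: AgentDeployment) -> list[str]:
    """Return human-readable violations. An empty list means the deployment passes."""
    violations = []
    if not d.approved:
        violations.append("deployment has not been approved")
    for system in sorted(d.systems & PROHIBITED_SYSTEMS):
        violations.append(f"prohibited system access: {system}")
    for data in sorted(d.data_classes & RESTRICTED_DATA):
        violations.append(f"restricted data class: {data}")
    for control in sorted(REQUIRED_CONTROLS - d.controls):
        violations.append(f"missing required control: {control}")
    return violations
```

Returning reasons rather than a bare pass/fail gives development teams something actionable, which helps with the communication challenge.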

7. Educate Developers on Secure Agent Deployment

The Moltbot/Moltbook incidents show that even experienced developers can miss fundamental security controls when moving fast with new technology.

Training Focus Areas:

- Differences between local AI models and agent frameworks

- Secure credential management (no hardcoded API keys!)

- Supply chain risks in agent ecosystems

- Testing and validation before production deployment

- When to involve security teams (spoiler: early and often)

The Cultural Shift: Security can't be an afterthought with AI agents. The stakes are too high.
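For the "no hardcoded API keys" point, the simplest habit to teach is reading secrets from the environment and failing loudly when they are missing. This is a minimal sketch. The variable name `AGENT_API_KEY` is an assumption, and a secrets manager is a better home for production credentials.

```python
import os


def require_secret(name: str) -> str:
    """Read a secret from the environment and refuse to start without it."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"missing required secret: {name}")
    return value


# Usage: the key never appears in source control or in a frontend bundle.
# api_key = require_secret("AGENT_API_KEY")
```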

The Vibe Coding Dilemma

For CISOs, the "vibe coding" trend creates a unique challenge: How do you secure applications when developers don't fully understand the code they're shipping?

The Appeal Is Real

AI-assisted development is democratizing software creation. Non-programmers can build functional applications. Experienced developers can ship 10x faster. The productivity gains are enormous.

The Security Gap Is Also Real

When AI writes your code:

- You may not understand the security implications

- Default configurations might be insecure

- Dependencies and libraries get included without review

- Testing focuses on functionality, not security

The Path Forward (Maybe)

Wiz Research suggests that AI tools themselves need to get better at security:

"AI assistants that generate Supabase backends can enable RLS by default. Deployment platforms can proactively scan for exposed credentials and unsafe configurations. In the same way AI now automates code generation, it can also automate secure defaults and guardrails."

The CISO's Interim Strategy: Until AI tools are secure-by-default, enforce security reviews for all vibe-coded applications before they touch production data. Think of it like code review, except you're reviewing AI-generated code that the developer may not fully understand.

The Enterprise AI Agent Landscape

While Moltbot made headlines, it's far from the only AI agent framework being deployed in enterprises. Here's what CISOs should know about the broader ecosystem:

Categories of AI Agents in Enterprise

- Coding Assistants (GitHub Copilot, Amazon CodeWhisperer)

  - Usually lower risk (operate within IDE)

  - Still can leak code or credentials if misconfigured

- Workflow Automation Agents (Zapier AI, n8n with AI)

  - Medium risk (connect multiple services)

  - Require careful permission scoping

- Autonomous Agents (Moltbot, AutoGPT, GPT-Engineer)

  - Highest risk (take initiative, execute code)

  - Require maximum security controls

- Business Process Agents (Salesforce Einstein, Microsoft Copilot)

  - Vendor-managed, often more secure

  - Still need configuration review and access controls

The Shared Risk Factors

Regardless of category, all AI agents share common security challenges:

- They accumulate privileged access over time

- Compromise can be subtle (data exfiltration vs. obvious disruption)

- Traditional security tools may not detect agent-based attacks

- Incident response is complicated by automation

Real-World Attack Scenarios

Let's make this concrete with scenarios based on the Moltbot vulnerabilities:

Scenario 1: The Compromised Skill (Supply Chain Attack)

The Setup:

- Junior developer finds a Moltbot skill for "Automated Code Review"

- Skill has 15,000 downloads and 4.8-star rating (all fake)

- Developer installs it to help review pull requests

The Attack:

- Skill contains hidden code that exfiltrates intellectual property

- Every code review sends source code to attacker's server

- After 3 months, attacker has your entire codebase

The Damage:

- Trade secrets stolen (valued at $50M+)

- Compliance violations (customer data in code comments)

- Competitive disadvantage (competitors now have your algorithms)

The Prevention:

- Mandatory security review for all agent extensions

- Code signing and supply chain verification

- Network monitoring for unusual outbound transfers
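One way to make unusual outbound transfers stand out is to give each agent an explicit list of hosts it may talk to and flag everything else. The sketch below assumes outbound requests can be intercepted as URLs. The allowlist contents and function name are illustrative.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for a code-review agent.
ALLOWED_HOSTS = {"api.github.com"}


def egress_allowed(url: str) -> bool:
    """Allow a request only if its exact hostname is on the allowlist."""
    host = urlsplit(url).hostname
    return host is not None and host in ALLOWED_HOSTS


print(egress_allowed("https://api.github.com/repos"))          # True
print(egress_allowed("https://api.github.com.evil.test/up"))   # False
```

Matching the parsed hostname exactly, rather than searching the URL for a trusted substring, is what stops the lookalike domain in the second call.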

Scenario 2: The Lateral Movement

The Setup:

- Marketing team deploys Moltbot to manage social media

- Agent has access to Twitter, LinkedIn, and internal brand guidelines

- No network segmentation from corporate network

The Attack:

- Attacker compromises Moltbot through public API endpoint

- Uses Moltbot's network position to scan internal systems

- Discovers misconfigured database server

- Pivots to corporate network, deploys ransomware

The Damage:

- Entire company shut down for 5 days

- $2M ransomware payment

- $10M in lost revenue and recovery costs

The Prevention:

- Agent isolation in separate network segment

- Zero-trust architecture (agents can't pivot freely)

- Microsegmentation and network monitoring

Scenario 3: The Insider Threat Amplifier

The Setup:

- Disgruntled employee has legitimate Moltbot deployment

- Agent has broad access to HR systems, finance, and email

The Attack:

- Employee programs agent to exfiltrate sensitive data nightly

- Agent's automated behavior blends in with normal activity

- Employee resigns, agent continues operating for weeks

The Damage:

- Customer PII sold on dark web (GDPR fines: $20M)

- Financial data leaked to competitors

- Executives' emails published, forcing CEO resignation

The Prevention:

- Activity logging for all agent actions

- Behavioral analytics to detect unusual patterns

- Automatic deprovisioning when employees leave

- Data loss prevention (DLP) monitoring
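Automatic deprovisioning depends on knowing which human owns each agent. Assuming you maintain that mapping and can pull a list of active employees from HR, finding orphaned agents is a short comparison. The identifiers below are invented for the example.

```python
def orphaned_agents(agent_owners: dict[str, str], active_employees: set[str]) -> list[str]:
    """Return agents whose owner is no longer an active employee."""
    return sorted(
        agent for agent, owner in agent_owners.items()
        if owner not in active_employees
    )


agents = {"hr-report-bot": "alice", "inbox-triage": "bob"}
print(orphaned_agents(agents, active_employees={"bob"}))  # ['hr-report-bot']
```

Running this on the same schedule as account offboarding closes the gap where an agent keeps operating for weeks after its owner resigns.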

The Positive Response: Moltbot's Recovery

Despite the severity of these vulnerabilities, there's a silver lining in how the Moltbot and Moltbook maintainers responded.

The Responsible Disclosure Timeline

Moltbook Incident:

- January 31, 21:48 UTC - Wiz contacted Moltbook maintainer

- January 31, 22:06 UTC - Reported database misconfiguration

- January 31, 23:29 UTC - First fix deployed (agents, owners, admins tables secured)

- February 1, 00:13 UTC - Second fix (messages, notifications, votes)

- February 1, 00:44 UTC - Third fix (write access blocked)

- February 1, 01:00 UTC - Final fix (all tables secured)

Total remediation time: ~3 hours.
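The arithmetic behind that figure can be checked directly from the timeline. The year is an assumption based on the January 2026 launch mentioned earlier, and it does not affect the result.

```python
from datetime import datetime, timezone

# Timestamps from the timeline above (year assumed to be 2026).
first_contact = datetime(2026, 1, 31, 21, 48, tzinfo=timezone.utc)
report_sent = datetime(2026, 1, 31, 22, 6, tzinfo=timezone.utc)
final_fix = datetime(2026, 2, 1, 1, 0, tzinfo=timezone.utc)

print(final_fix - first_contact)  # 3:12:00
print(final_fix - report_sent)    # 2:54:00
```

Measured from first contact the fix took 3 hours 12 minutes, and measured from the actual report it took 2 hours 54 minutes, so "~3 hours" holds either way.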

What They Did Right

O'Reilly praised the Moltbot maintainer's response:

"He takes it seriously, no ego about it. Some maintainers get defensive when you report vulnerabilities, but Peter immediately engaged, started pushing fixes, and has been collaborative throughout. I've submitted pull requests with fixes myself because I actually want this project to succeed."

The Lessons for CISOs:

- Fast response matters (hours, not days)

- Collaboration with security researchers beats defensiveness

- Transparent communication builds trust

- Open source means community can verify fixes

The Broader Implications: AI Safety Culture

The Moltbot story reveals a critical gap in the AI development ecosystem: We're moving so fast that security is often an afterthought.

The Speed vs. Security Tradeoff

O'Reilly put it bluntly: "People with little to no experience working a command line are vibe coding complex systems without understanding how they work or what they're building. More people building is a good thing... but these new builders are going to need to learn security just as fast as they're learning to vibe code."

The Problem: You can't speedrun development and ignore decades of hard-learned security lessons.

The Reality: But that's exactly what's happening across the industry.

Building an AI Safety Culture

What would a mature AI safety culture look like?

- Secure Defaults: AI code generators should create secure configurations by default (RLS enabled, credentials in environment variables, etc.)

- Built-in Security Reviews: Development platforms should automatically scan for common vulnerabilities in AI-generated code

- Education at Point of Use: When an AI suggests code with security implications, it should explain the risks

- Community Standards: Open source AI agent frameworks should include security best practices in their documentation

- Certification Programs: Similar to cloud security certifications, create AI agent security credentials

Predictions: Where This Is Heading

Based on the Moltbot incidents and broader trends, here's what I expect in the next 12-24 months:

Prediction 1: Major Enterprise Breach via AI Agent

Within the next year, a Fortune 500 company will suffer a significant breach due to a compromised AI agent. The attack will likely follow one of the patterns we've discussed:

- Supply chain attack through a third-party skill

- Lateral movement from a weakly secured agent

- Credential theft from agent-accessible data

The Impact: This will be the AI agent equivalent of the Target breach—the incident that finally gets board-level attention.

Prediction 2: Regulatory Scrutiny

Regulators will start treating AI agents like any other software that handles sensitive data:

- GDPR enforcement actions for agents that mishandle PII

- SEC investigations into AI-related security failures

- Industry-specific regulations (healthcare, finance) will explicitly address AI agents

The Trigger: The first few high-profile incidents will prompt regulatory action.

Prediction 3: Insurance Market Response

Cyber insurance policies will start explicitly addressing AI agent risks:

- Exclusions for unapproved agent deployments

- Required controls for agent security

- Premium increases for organizations with extensive agent use

The Market Signal: Insurance companies are excellent at risk assessment. When they start charging more for AI agent risk, executives will pay attention.

Prediction 4: Security Tool Evolution

The security vendor ecosystem will adapt:

- AI-specific threat detection tools

- Agent behavior analytics platforms

- Automated agent security testing frameworks

- Supply chain verification for agent skills/plugins

The Opportunity: This will be a growing market as agent adoption accelerates.

Prediction 5: Consolidation Around Secure Platforms

Organizations will shift from DIY agent deployments to enterprise platforms with built-in security:

- Microsoft Copilot (already happening)

- Google Workspace AI agents

- Enterprise-focused agent frameworks with security baked in

The Tradeoff: Less flexibility, more security. Many orgs will happily make that trade.

Conclusion: The Opportunity and the Responsibility

The story of Moltbot is not about one flawed project. It's about an entire ecosystem moving faster than its security maturity can support.

The Opportunity

AI agents genuinely can transform how we work. The vision of an assistant that manages your calendar, triages your email, and automates routine tasks is not science fiction—it's achievable with today's technology.

For enterprises, the productivity gains are enormous:

- Developers can ship features 5-10x faster

- Customer service can scale without proportional headcount

- Business processes can be automated that were never economically viable before

The Responsibility

But with great capability comes great responsibility—and great risk.

CISOs need to:

- Acknowledge the reality: AI agents are coming to your organization, whether IT approves them or not

- Engage proactively: Work with business units to understand use cases and provide secure alternatives

- Establish guardrails: Create policies, technical controls, and monitoring before incidents force reactive measures

- Educate broadly: Security awareness training needs to cover AI agent risks

- Stay vigilant: The threat landscape is evolving as fast as the technology

The Path Forward

O'Reilly's final advice captures the balance we need to strike:

"Don't give the agent access to everything just because it's convenient. Treat your agent infrastructure like you'd treat any internet-facing service. Put it behind proper authentication, don't expose control interfaces to the public internet, audit what it has access to, and be skeptical of the supply chain."

In other words: The same security fundamentals that protected us in the past still apply in the AI age. We just need to remember to use them.

The future of AI agents is bright—but only if we secure it properly.

Key Takeaways for CISOs

- AI agents are already in your organization - Developers are deploying them now, with or without approval

- The attack surface is massive - Every capability is also a vulnerability

- Supply chain risk is critical - Third-party skills and plugins are high-risk vectors

- Vibe coding creates blind spots - AI-generated code may contain security flaws developers don't understand

- Traditional security applies - Authentication, least privilege, network segmentation, and monitoring are still essential

- Speed of response matters - The Moltbook team remediated in 3 hours, setting the gold standard

- Proactive is better than reactive - Establish policies and controls now, before the first incident

Additional Resources

- Moltbot GitHub Repository

- 404 Media's Investigation

- Wiz Research: Moltbook Database Exposure

- OWASP Top 10 for LLMs

- NIST AI Risk Management Framework

About This Article: This piece is part of Hacker Noob's ongoing coverage of emerging security threats in AI systems. We aim to make complex security topics accessible to beginners while providing actionable guidance for professionals.
