The AI Governance Maturity Gap: Why Most Security Teams Are Behind

Artificial intelligence is moving faster than security governance frameworks can adapt.

Organizations are deploying large language models into workflows, automating decision chains, and integrating AI into customer-facing systems — often without fully understanding the new attack surface they are creating.

The result isn’t just technical risk.

It’s governance risk.

And most security teams are behind.

AI Defense in Action – Feb 21

40% discount code: CISOMP40

Adoption Is Accelerating. Oversight Is Not.

In many organizations, AI adoption begins as experimentation:

- Productivity copilots

- Internal chatbots

- Automated analysis pipelines

- AI-assisted code generation

- AI-powered customer interactions

What begins as experimentation often becomes embedded infrastructure.

But governance rarely scales at the same speed.

Few teams have:

- Formal AI-specific threat models

- Adversarial testing processes

- Model access control policies

- Data lineage tracking for model inputs and outputs

- Executive-level accountability structures

This gap between deployment and governance is where real risk accumulates.

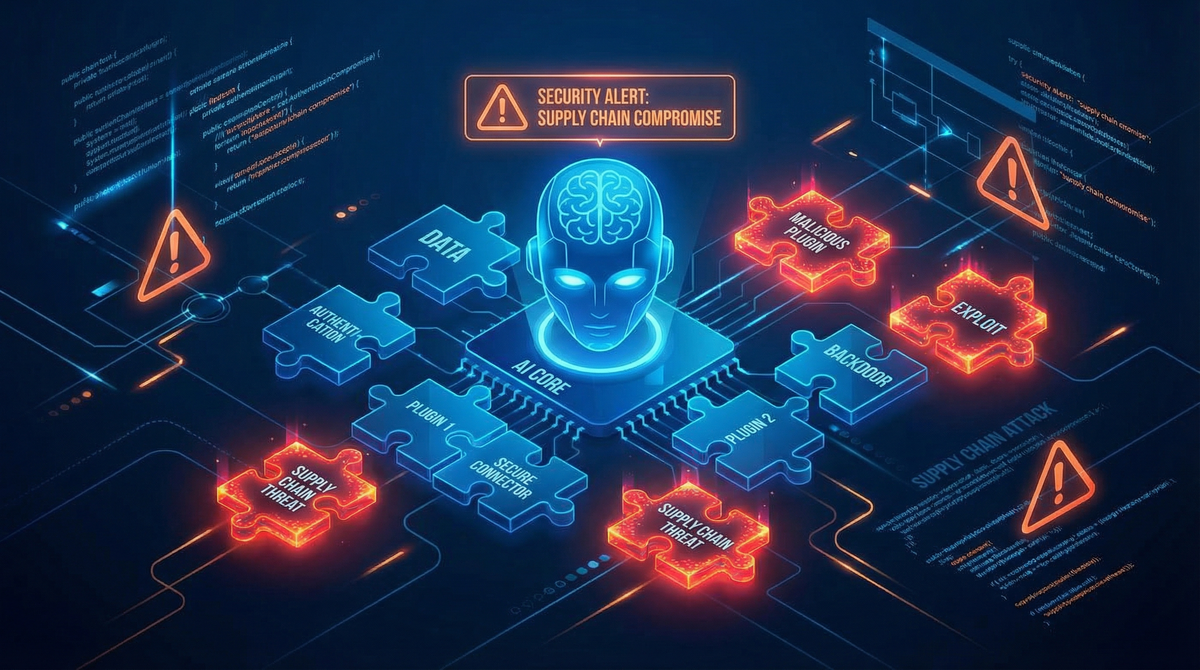

AI Expands the Attack Surface in New Ways

Traditional security programs were designed around:

- Networks

- Endpoints

- Identity systems

- Applications

- Cloud infrastructure

AI introduces entirely different classes of risk.

1. Prompt Injection & Output Manipulation

Large language models can be manipulated through crafted inputs. The attack surface shifts from code exploitation to cognitive exploitation.

2. Model Poisoning

If training data is compromised, the model itself becomes unreliable. Integrity moves upstream into data supply chains.

3. Data Exfiltration via Inference

Sensitive information can be extracted from models through carefully structured queries.

4. Shadow AI Deployment

Business units may integrate AI tools without security review, creating blind spots across the organization.

These are not theoretical concerns. They are operational realities.

The Accountability Shift

AI failure does not look like traditional breach events.

It can appear as:

- Biased automated decisions

- Hallucinated but authoritative outputs

- Improperly disclosed data

- Unintended regulatory violations

When these failures occur, the question will not be:

“Which tool misfired?”

It will be:

“Who was accountable for governance?”

This shifts AI security from a tooling discussion to a leadership discussion.

Boards and regulators will not distinguish between “innovation risk” and “security risk.”

They will view them as the same domain of oversight.

Why Many Security Teams Are Behind

Most security programs evolved in response to:

- Network compromise

- Ransomware

- Identity abuse

- Cloud misconfiguration

AI requires:

- Cross-functional governance

- Policy-layer integration

- Ethical risk consideration

- Model lifecycle oversight

- Data provenance validation

Security teams trained to think in terms of infrastructure must now think in terms of systems behavior and decision integrity.

That’s a different muscle.

And it hasn’t fully developed across the industry yet.

What Mature Organizations Are Building Now

Forward-leaning security leaders are already implementing:

- AI-specific threat modeling frameworks

- Red teaming against model behavior

- Controlled model access layers

- Logging and monitoring of AI interactions

- Formal AI governance committees

- Clear executive ownership

They are not waiting for regulation to force structure.

They are building internal structure first.

AI Security Is a Career Inflection Point

For security professionals, this moment represents leverage.

AI governance literacy is rapidly becoming a differentiator.

Future CISOs will need:

- Technical understanding of model vulnerabilities

- Governance frameworks for AI lifecycle management

- Communication skills to brief boards on AI risk

- Policy fluency as regulatory guidance evolves

Those who build competence now will lead the next security cycle.

Closing the Maturity Gap

The AI governance gap will not close through tooling alone.

It will close through:

- Exposure

- Structured frameworks

- Peer exchange

- Practical implementation guidance

For those actively exploring AI defense strategy, we’re collaborating with Packt around their upcoming AI Defense in Action workshop (Feb 21), which focuses on practical implementation of AI security and governance controls.

Our community has access to a 40% discount for those who find it relevant.

Regardless of events or workshops, the core issue remains:

AI deployment without governance is not innovation.

It is unmanaged risk.

The organizations that recognize this early will not just avoid failure.

They will build durable trust.